“The Signal” is a publicly available quarterly publication; archived editions are available to SAA members. If you have information or suggestions for the newsletter, please email the SAA Communications Committee Chair, R. Ross MacLean, at ross[dot]maclean[at]yale[dot]edu.

Follow us on Bluesky: @ambulatory-assessment.org

Join us on LinkedIn: https://www.linkedin.com/company/society-for-ambulatory-assessment/

Conference Information

Preparation underway for SAA 2026 in Vienna!

The SAA 2026 conference will be held August 3rd through 5th at the University of Vienna. The Health Psychology Group and the Ecological Momentary Assessment lab are busy organizing the conference agenda and preparing to welcome you to Vienna this summer. Two keynote speakers have been announced: Prof. Dr. Brenda Penninx and Prof. Dr. Eiko Fried. As in previous years, the Society for Ambulatory Assessment will present the SAA Early Career Award at the conference, and the awardee will give the third keynote speech. The award recognizes an outstanding early career researcher who has made an important contribution to the field of ambulatory assessment and who can communicate this contribution in a broader, integrative, and forward-looking manner in a keynote presentation. For more information, see the Community Information section below.

Important upcoming dates:

- Acceptance notifications and start of registration: end of March 2026

- End of early bird registration: end of April 2026

Welcome to the Main Building of the University of Vienna

SAA 2026 will take place in the main building of the University of Vienna. Located on Vienna’s Ringstrasse, the building was designed by architect Heinrich von Ferstel in the historicist style and opened in 1884. In addition to the university administration, it houses the university library, several institutes, and administrative facilities (the research and teaching facilities of the University of Vienna are spread over more than 60 locations). Thanks to its location in the historic center of Vienna and its easy accessibility, the main building is a popular venue for national and international congresses. More than 25 lecture halls with modern technical equipment are available for events of all kinds. The centrally located event area on the second floor, with the large ballroom, small ballroom, and senate hall, offers an impressive ambience with its anterooms. Honors, receptions, and similar prestigious occasions, as well as lectures and specialist exhibitions of various sizes, find a dignified setting here. The barrier-free auditorium with a modern information center leads to the leafy arcade courtyard, where a cafeteria invites visitors to relax in the summer months. Busts and statues in the arcades commemorate famous university teachers.

Hybrid conference

SAA 2026 will be organized as a hybrid event. Online participants will be able to join the keynotes and oral sessions including symposia and interact with the presenters on-site. Online participants will also be able to present live in oral sessions and symposia. Please note that there will be no online poster sessions. Online participants presenting a poster will be able to upload the poster to an online platform and view the other posters through the same platform.

SAA Spotlight

EMA-CleanR: A new tool to visualize and clean EMA/ESM data

For this month’s SAA Spotlight, Haijing Hallenbeck, PhD (SAA Communications Committee) spoke with Sarah Sperry, PhD about EMA-CleanR, a new program to assist with the cleaning and organization of intensive longitudinal data. To learn more about the program and access R code for EMA-CleanR click here.

Haijing Hallenbeck: What was the inspiration behind this project?

Sarah Sperry: The inspiration was two-fold. First, I was tired of writing and re-writing scripts to clean EMA/ESM data across my various studies and decided we needed an adaptable, centralized pre-processing pipeline that would do a quality assessment of the data in the same way for each project. Second, I realized that a tool like this could help standardize the pre-processing and reporting of data quality of EMA/ESM studies outside of my own lab and across academia. While there are several papers at this point that outline what information should be presented in a paper using EMA/ESM data, there aren’t as many resources about how to actually get that information in a systematic way! I do want to recognize the prior work of other labs who have also produced such tools (e.g., Revol and colleagues [2024], Bringmann and colleagues [2021]). Each of our programs has unique things to offer and, I would say, requires a different level of proficiency to implement.

Sarah Sperry, PhD (left) and her graduate student, Victoria Murphy (right), who is a co-author on the program

HH: What was the process like working on this project?

SS: It was fun! It first started with a brainstorm on what to include in the pipeline – including visuals representing various aspects queried in the pipeline. Then it came time to write code! Admittedly, I am not the world’s best coder despite enjoying the exercise (nor have I ever written code to be consumed by others). So, I wrote the initial code how I understand it best and then my amazing trainee Victoria Murphy reviewed my work and made many improvements. Lastly, our collaborator Gabriel Mongefranco, the data architect from the Eisenberg Family Depression Center at the University of Michigan, took the code and made it more accessible to the public – he took out my hard codes (whoops), wrote informational instructions on how to adapt the code, and went through the steps of publishing it so that it was accessible to the public.

HH: How did you select the different data processing/cleaning/visualization steps to include?

SS: It was influenced by many things including:

- The best practices I learned for EMA/ESM data from my graduate school mentor, Tom Kwapil, PhD

- Recommendations for reporting by Fritz and colleagues (2024), Rintala and colleagues (2019), and Trull & Ebner-Priemer (2020).

- Recommendations regarding careless responding in EMA by Jaso and colleagues (2022).

- My own experiences with munging EMA/ESM data, what variables are important to look at for my studies, and recommendations from my trainee, Victoria Murphy

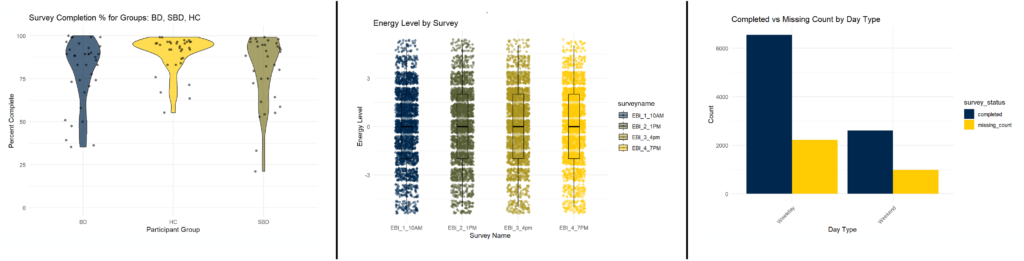

Images from an example output of EMA-CleanR

HH: What level of R proficiency is recommended for using this tool?

SS: I would say intermediate. One of the things I’ve noticed with a lot of open access R code is that it requires a higher level of proficiency. I wrote this hoping it would be accessible and understandable to those with intermediate levels of proficiency so they wouldn’t be scratching their head or pulling out their hair. Hopefully, we succeeded!

HH: What advice would you give to researchers starting out with EMA data analysis?

SS: EMA/ESM data is incredibly rich, dimensional, and dynamic. This is exciting! But, the results we draw from this data can be influenced by careless responding, missingness, and the assumptions and distributions of the data – so – don’t skimp on pre-processing and quality assessment!

References cited in the interview:

Revol, J., Carlier, C., Lafit, G., Verhees, M., Sels, L., & Ceulemans, E. (2024). Preprocessing experience-sampling-method data: A step-by-step framework, tutorial website, R package, and reporting templates. Advances in Methods and Practices in Psychological Science, 7(4), 25152459241256609.

Bringmann, L. F., van der Veen, D. C., Wichers, M., Riese, H., & Stulp, G. (2021). ESMvis: a tool for visualizing individual Experience Sampling Method (ESM) data. Quality of Life Research, 30(11), 3179-3188.

Fritz, J., Piccirillo, M. L., Cohen, Z. D., Frumkin, M., Kirtley, O., Moeller, J., … & Bringmann, L. F. (2024). So you want to do ESM? 10 essential topics for implementing the experience-sampling method. Advances in Methods and Practices in Psychological Science, 7(3), 25152459241267912.

Rintala, A., Wampers, M., Myin-Germeys, I., & Viechtbauer, W. (2019). Response compliance and predictors thereof in studies using the experience sampling method. Psychological Assessment, 31(2), 226.

Trull, T. J., & Ebner-Priemer, U. W. (2020). Ambulatory assessment in psychopathology research: A review of recommended reporting guidelines and current practices. Journal of Abnormal Psychology, 129(1), 56.

Jaso, B. A., Kraus, N. I., & Heller, A. S. (2022). Identification of careless responding in ecological momentary assessment research: From posthoc analyses to real-time data monitoring. Psychological Methods, 27(6), 958.

Research Brief

R. Ross MacLean, PhD (SAA Communications Committee Chair) reached out to Leonie Schorrlepp, MSc (co-lead author) and Dominique Maciejewski, PhD (senior author) to discuss their article entitled “Utilizing Qualitative Methods to Detect Validity Issues in Clinical Experience Sampling Methodology (ESM)” recently published in Psychological Assessment. You can access the article by clicking here.

R. Ross MacLean: One of the strengths of assessing variables in daily life using ESM has been a perceived advantage for ecological validity. In your manuscript, you explore how researchers may be taking this for granted. Was there a situation, finding, or personal experience that sparked your interest in exploring validity issues in ESM?

Dominique Maciejewski: Yes! I think all authors of this article have had an experience like that. For instance, Dr Marie Stadel, the other first author of this article, studied the combination of ESM with social networks in her PhD and realized how much we missed in ESM studies using the typical checkboxes (e.g., on location and company). For me, it was an ESM study I was conducting with my colleagues Dr Merlijn Olthof and Andrea Bunge at Radboud University in Nijmegen (the Netherlands). We asked participants to take part in a 60-day ESM study, and when the study ended, we invited them back to show them their data. What struck me most during those sessions was how much participants differed from each other in what the numbers meant to them. For instance, we asked participants to rate their overall mood five times per day on a scale from 0 to 100, and a “50” on that scale really did not seem to mean the same thing across participants. I was always a heavily quantitatively oriented person who implicitly assumed that the numbers directly reflect psychological states, but these conversations made me question what the numbers actually mean. This sparked my interest in response processes in ESM studies (i.e., how people interpret items and scales, and how they decide on their scores).

Leonie Schorrlepp: For me, this was while doing research for my Master’s thesis, which consisted of multiple case studies combining daily diaries and semi-structured interviews. In the final interview, one participant was searching the visualization of their data for one item, because they had had a very intense experience that deeply changed the meaning of that item. However, the change was not visible at all in the data, although in conversation it became clear how meaningful it was. I don’t think that this does much to the ecological validity of ESM. That remains as is. But this pointed me to how taking the numbers for granted can lead to missing what is actually going on, and if you are interested in what is actually going on, then not being able to draw conclusions about that becomes a massive problem.

Manuscript authors Leonie Schorrlepp (left) and Dominique Maciejewski (right)

RM: Qualitative research can play an important role in clarifying how an individual participant is interpreting a measure. It is safe to say that most PhD programs place a heavy emphasis on quantitative skills in the conduct of research. What would you say to the researcher that is worried about incorporating qualitative methods into their research program?

DM: I understand the hesitation. I think many of us, myself included, were primarily trained in quantitative methods. But, adopting qualitative methods can have a tremendous value in further contextualizing the quantitative approaches that we typically use in ESM.

Moreover, there is no such thing as “the” qualitative method. In the paper, we outline different methods that differ in how time-intensive they are. Even relatively small additions, like adding some possibilities for participants to reflect on the study, teach you a lot about the data that you did not know beforehand.

LS: I was lucky enough to have several courses on qualitative methods during my psychology Bachelor at the University of Glasgow, so they have always had the same value as quantitative methods in my education. But if you didn’t have that, I’d say, go find some qualitative friends! Starting qualitative research on your own is probably going to be very overwhelming, and there will be nuances and considerations that are unfamiliar, so having someone to support you in spotting these will be helpful. If you don’t know any qualitative researchers, check whether there is a qualitative research network at your university or specifically approach different departments and disciplines for support (e.g., anthropology, sociology, or some humanities might have more qualitative researchers).

RM: In the article you describe validity as a way to check whether participants are viewing the content in the same way as the researchers. As such, language and word choice are critical decisions to establish validity. How can shared decision-making between researchers and participants help increase validity in ESM studies?

LS: Validity is what you’re trying to argue for when checking in with participants, and whether those checks are even relevant validity evidence depends on your position regarding your phenomenon of interest. I would guess that most ESM researchers do want their items to reflect something very particular. In addition to this, I would say that the choice of self-report also tends to suggest that you think that participants have a relevant understanding of what you’re interested in. So, if this applies, I would say that inviting participants into the item development process can help your argument for validity. However, I can also imagine that a researcher might have good reasons for a very particular wording. In this case, participants’ input would probably do more harm than good. Instead, you might have to carefully train participants and include checks to verify that they share your understanding of your item wording.

DM: Involving participants from the beginning can help a great deal. Precise item formulation is important to guide participants in how to answer our questions. However, only by involving participants can you check whether your intended meaning aligns with the actual meaning by the participants. Including participants in the co-development and piloting of items helps to detect such ambiguities early on. This is really an iterative and bidirectional process: as a researcher you have the theoretical and methodological knowledge, but the participant has the lived experience that you want to capture.

RM: Interpretation of measure items at the between-person level is a known problem in cross-sectional (i.e., non-ESM) designs. How do concerns about between-person validity differ when a measure is given at a single time point versus repeatedly in an ESM survey?

DM: Systematic between-person differences (e.g., in scale interpretation) are already problematic in cross-sectional research, but at least they stay consistent because there is only one time-point. But these differences become more complex in longitudinal designs, because between-person differences in item and scale interpretation may change across time due to the repeated nature of ESM. For instance, what a “50” means to participants may already differ at the start, but then for some participants a further recalibration could take place – and for others not. This makes it more complex, because the measurement itself may not be stable across time, and this may not be the same for everyone.

LS: I don’t think there is a difference. If it’s important for my interpretation that my participants all share an understanding of items and [if] they don’t have this, then the problem doesn’t change whether this happens at one or at multiple timepoints. The additional timepoints just add the extra spice that there might be within-person changes, too, and within-person changes might create different between-person differences at different timepoints. But the problem is the same. As a consequence, the ways to detect interpretation differences that we suggest in this article (e.g., cognitive interviewing) have long been recommended for traditional questionnaire development (American Educational Research Association et al., 2014). We just want to encourage researchers to adopt them for ESM items and extend their use over the course of a study, rather than just in development.

RM: I imagine many of The Signal readers are interested in changes in within-person responses over time. You provided multiple examples of within-person changes that could affect validity. What do you think is the most significant threat to within-person validity in ESM surveys?

DM: If I had to choose, I would say that both changes in concept interpretation and scale interpretation are significant threats, particularly if these changes go unnoticed by the researcher. If my participant suddenly rates another concept, because their interpretation of it changed or because they interpret the scale differently, and I do not know about that as a researcher, then observed changes may no longer reflect true psychological change, which biases conclusions.

However, we still do not know a great deal about such within-person changes in the context of ESM studies. In the article, we give some examples, but we need more systematic research to address this. One study that we conducted, which we also reference in the article, aimed to investigate exactly that (Schorrlepp et al., 2025). During the study (28 days, 5x/day), we asked participants at each beep to reflect on their response processes. We have coded more than 4,000 responses now and will hopefully soon be able to answer the question of whether people stay consistent and how this differs between people!

LS: The biggest threat is probably ignoring that such changes may occur. Whenever we present this topic or simply talk to people about it, it seems to resonate. Either it connects to a gut feeling that someone already had, or they actually heard from their participants, in person or in some feedback form, that they had different or changing concept or scale interpretations. Neither gut feelings nor anecdotes should be taken lightly: there probably is a problem, and ignoring it will just lead to less meaningful research. That is such a pity if you’ve already put much work in and really care about the subject.

RM: Problems associated with desirability or positive impression management have long been considered a confound in survey research and assessment. As ESM approaches become more common in clinical trial research, what are important considerations to effectively address possible problems with study interpretation in clinical samples?

DM: I would say that qualitative methods can provide an additional tool to enhance validity by better understanding how participants respond to the study. Participants often use questionnaires as a communication tool. For instance, they may score worse to stay in treatment or score better to please the researcher (see work by Femke Truijens, like this one: Truijens et al., 2023). This can obviously bias clinical trial outcomes. If you provide some less restrictive ways than questionnaires with their typically closed formats, patients may not feel the need to adjust their numerical answers. In the article, we provide some examples of how this can be done, either in the beginning or end with semi-structured interviews or using open text-boxes or voice recordings during the study.

LS: With a clinical sample, it is probably very important to find the balance between research goals and researcher responsibility. I would hope that approaching this with care and input from experts (clinical and by-experience) would set people on the right path for balancing those. Like Dominique said, Dr Femke Truijens and colleagues do some very nice work on this.

RM: Your manuscript provides multiple options to incorporate methodology that can help inform validity of ESM survey items. As you note, many of these techniques or approaches are not often used in ESM studies. While researchers should select approaches that best match their study or population, what is a single thing that you believe every ESM study can do to address measure validity?

DM: First, I’d like to highlight that many researchers already collect a lot of qualitative information, but they often do that informally. What is lacking is that they do that in a systematic and transparent way and that they share qualitative information with other researchers. Like Leonie mentioned earlier, I also had it happen so often that I talked to other researchers who told me super interesting things they learned by talking to their participants. And then I often think, if we made this process transparent and reported the results, we could collectively learn much more about how our measures function in practice.

Second, if I had to choose one single thing that every ESM study can do, it would be to give participants the opportunity to reflect on their scores, for instance with open textboxes (e.g., at the end of the day or end of the study). Very often, we may learn things we did not know before. For instance, in the study I mentioned in the beginning, where we followed participants using ESM and interviewed them afterwards, there was a participant that did not recognize herself in the data we showed to her. While the quantitative data showed that she got more stable towards the end of a study with an overall higher mean in affect, she told us that the way in which she scored, not the actual level, was indicative of her affect. When she felt less well, she became more stable in scoring, and that stability was, according to her, indicative of more negative affect. Without this information, we could have falsely interpreted the increased stability around a higher mean as a transition towards a more positive state (Olthof et al., 2024).

LS: The single thing we can all do to address the problems that we point out is to thoroughly conceptualize the phenomenon of interest and follow through on all the consequences that brings for measurement and its interpretation. Should your concept, for example, be interpreted by everyone in exactly the same way? Then you need to check that this is the case and potentially correct for it. Could your concept mean different things for different people at different points in time? Then you should probably still check how this is the case and adjust your conclusions to match this variation, for example, by not making strong claims about the content as you personally think it is. I think that having a thorough conceptualization at the start of our research helps with holding us accountable when we get excited later in the process.

RM: The file drawer effect (where aspects of a study go unpublished) may be a concern for using these mixed methods approaches. A lack of familiarity with qualitative methods may increase the likelihood that ESM validity data will not be analysed or published. How can our field ensure that important information about ESM measure validity is shared with the field so others can benefit from these important findings?

DM: Yes, I think that is a big issue, and it is also what a recent study has found (Bringmann et al., 2026). We often collect qualitative information (e.g., open text-boxes), but then we do not analyse it, because we do not know how. That is what we were trying to nudge with this paper: to give an overview of how to use qualitative methods to study and improve the validity of ESM data. In the supplementary material, we also have a list of resources that can help you get started, such as a pre-registration template for qualitative research, guidelines on how to de-identify data, and different software for analyses. One of the resources I want to highlight here is the qualitative coding app developed by Dr Marie Stadel and Dr Anna Langener, which was super helpful when coding open-text boxes in ESM data (available at https://github.com/AnnaLangener/QualitativeCodingApp; Stadel et al., 2024).

Beyond that, I think we need a cultural shift in how we value measurement work. If this becomes something that is publishable and gets rewarded, then researchers will be more likely to analyse and share that data. So, I hope that it is just a matter of time and that more researchers pick up mixed methods. And if you want to know more, we have formed the “Measurement is the New Black” consortium (www.mitnb.org), which focuses on improving measurement in ESM data. We are currently working on several projects that focus on how to integrate quantitative and qualitative data to improve validity in ESM studies.

LS: Validity has no place in the file drawer, and if qualitative methods are the way to argue for it, then we must make time and space for this in the research process and the field. From personal experience, I am not too concerned about the field. Whenever I have talked about this, people have been concerned but enthusiastic at the same time. So, interest is present. The skill issue that you mention is, of course, also important. How do you start dealing with text data if you have no experience with it? Once again, find yourself some qualitative friends, use course offers if possible, and if you’re a more senior researcher, help those junior to you in making time and space to learn the necessary skills. And finally, make time for the work. The data that you get from qualitative methods can be rich and complex, and it can take time to get through it. But it is so, so rewarding to engage with. On a research and validity level, of course, but it is also really fun and sometimes surprisingly personal to engage with all the experiences and thoughts that participants share – and they do share so much, it’s just up to us to engage with it.

References cited in the interview:

American Educational Research Association, American Psychological Association, & National Council on Measurement in Education (Eds.). (2014). Standards for educational and psychological testing. American Educational Research Association.

Bringmann, L., Gudmundsdottir, G., Schorrlepp, L., Vogelsmeier, L., De Smet, M., Vrijen, C., van Roekel, E., Fritz, J., Chen, J., Kirtley, O., Eronen, M., Chung, J., Stadel, M., & Hoemann, K. (2026). Beyond numbers: Revisiting the role of open-response data in experience sampling methodology. Pre-print. https://osf.io/jemrp

Olthof, M., Bunge, A., Maciejewski, D. F., Hasselman, F., & Lichtwarck-Aschoff, A. (2024). Transitions and resilience in ecological momentary assessment: A multiple single-case study. Journal for Person-Oriented Research, 10(2), 89. https://doi.org/10.17505/jpor.2024.27102

Schorrlepp, L., Silan, M. A., & Maciejewski, D. F. (2025). How do people decide how they feel? Response processes in ESM studies. OSF. https://doi.org/10.17605/OSF.IO/MD9XA (project page: https://osf.io/md9xa/overview)

Stadel, M., Langener, A., Hoemann, K., & Bringmann, L. (2024). Assessing daily life activities with experience sampling methodology (ESM): Scoring predefined categories or qualitative analysis of open-ended responses? OSF. https://doi.org/10.31234/osf.io/dfkz7

Truijens, F. L., De Smet, M. M., Vandevoorde, M., Desmet, M., & Meganck, R. (2023). What is it like to be the object of research? On meaning making in self-report measurement and validity of data in psychotherapy research. Methods in Psychology, 8, 100118. https://doi.org/10.1016/j.metip.2023.100118

Education Opportunities

If you are involved in or are aware of any education opportunities that may interest SAA members, please reach out to the Communications Committee (R. Ross MacLean at ross[dot]maclean[at]yale[dot]edu). We are happy to highlight SAA members’ training workshops in The Signal and on our social media.

Notifications and Recognition

Submit a “Signal Boost” for an SAA member

The Communications Committee would like to start a new initiative in which SAA members can submit recognition for their own or another SAA member’s accomplishments, assistance, and/or achievements. This could be winning an award, getting a promotion, defending a thesis/dissertation, obtaining a grant, helping with an analysis, solving a vexing technical problem, or anything else that deserves recognition. We would love to hear from more members!

To submit a Signal Boost, follow the instructions below:

- Click the icon on the right

- Enter “Signal Boost” in the subject line

- Clearly identify the SAA member that you would like to recognize and write a brief description of why you would like to recognize this individual.

Community Information

Nominations for the SAA Early Career Award

Eligibility

All current SAA members who

- obtained their doctoral degree within the last three years (i.e., in the calendar year 2023 or later; the three-year post-degree eligibility period is extended by one additional year for each child born in this period)

- are still working in academia at the time of application

are eligible to apply.

Application materials

Applicants are asked to submit:

- One peer-reviewed ambulatory assessment paper published in 2024 or later (“online first” and final print versions are acceptable)

- A motivation letter (maximum 400 words) addressing the following points:

- What is the contribution of your work to the field of ambulatory assessment?

- Why did you select this paper rather than your other published papers?

- What vision does the selected paper substantiate, and where should your research field go in the future?

We understand applicants’ desire to submit flawless motivation letters. However, we strongly encourage personal and carefully thought-out letters that clearly reflect the time and effort invested in preparing the application. With regard to language, we fully recognize that not all members of the Society are native English speakers, and applications will not be evaluated based on linguistic perfection.

Applications should be sent by e-mail (including the article PDF and the motivation letter) to [email protected].

Keynote expectations

The award recipient will be invited to give a keynote presentation in person at the SAA conference. The keynote is expected to go beyond a presentation of the awarded paper. In particular, the keynote should:

- situate the awarded work as part of your line of research within the broader field of ambulatory assessment,

- highlight the conceptual, methodological, or substantive contributions of your research, and

- articulate a forward-looking perspective on future developments and directions in the field.

Submission deadline: 15 April 2026

Notification of decision: Early June 2026

SAA Communications Committee Members

- R. Ross MacLean, Ph.D. (Committee Chair), Yale School of Medicine and VA Connecticut Healthcare System

- Lauren DiPaolo, Ph.D., Corporal Michael J. Crescenz VA Medical Center

- Anne Grünert, Ph.D., RWTH Aachen University

- Haijing Hallenbeck, Ph.D., VA National Center for PTSD and Stanford University Medical Center

- Julia Heckmann-Umhau, M.Sc., Universität Heidelberg

- Laura König, Ph.D., University of Vienna

- Femke Lamers, Ph.D., Amsterdam University Medical Center